Complete Guide to Automated Cloud Engineering

Automated cloud engineering is reshaping how businesses manage cloud infrastructure. By using AI and Infrastructure as Code (IaC) tools like Terraform, Pulumi, and AWS CloudFormation, teams can automate provisioning, scaling, and monitoring, reducing manual effort and errors. This approach delivers faster deployments, better cost management, and improved security.

Key takeaways:

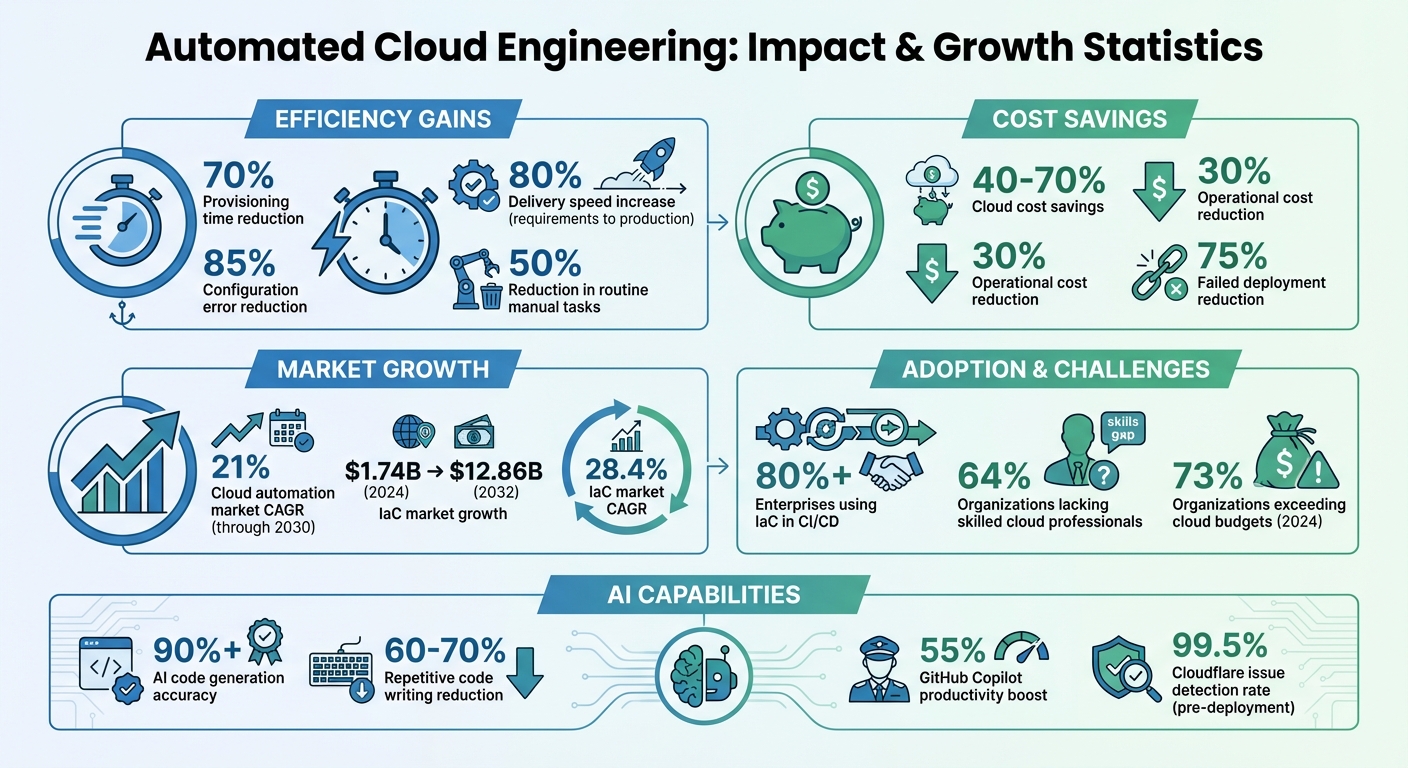

- Efficiency Gains: Reported results include provisioning times cut by up to 70% and configuration errors reduced by 85%.

- Cost Savings: AI-driven tools help organizations save 40–70% on cloud costs by optimizing resources and eliminating waste like idle instances.

- Security Improvements: AI identifies vulnerabilities, enforces compliance, and reduces human error, which is frequently cited as the cause of up to 99% of cloud security failures.

- Tool Integration: Popular IaC tools, including Terraform and Pulumi, integrate seamlessly with AI to simplify workflows and maintain consistency across environments.

- AI-Powered Platforms: Solutions like Kanu AI automate the entire delivery cycle, from intent capture to deployment validation; Kanu AI reports that this speeds up delivery by 80%.

With the cloud automation market growing at 21% CAGR through 2030, adopting AI-driven workflows is becoming essential for businesses to stay competitive and efficient.

Automated Cloud Engineering: Key Statistics and Benefits


Infrastructure as Code and AI Integration

Infrastructure as Code (IaC) treats cloud infrastructure like software. Instead of manually configuring servers, databases, and networks through dashboards, you define them in version-controlled files. Because every environment is built from the same definitions, this approach reduces inconsistencies and keeps development, staging, and production environments aligned. By automating these processes, teams can achieve greater efficiency and scalability.

IaC adoption is growing quickly. The global market is projected to expand from $1.74 billion in 2024 to $12.86 billion by 2032, a 28.4% CAGR. While over 80% of enterprises already integrate IaC into their CI/CD pipelines, 64% of organizations report a lack of skilled cloud professionals to manage it. This skills gap highlights the growing role of AI in automating IaC workflows.
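
As a quick sanity check on the market figures, the compound annual growth rate can be recomputed from the start and end values. This is a minimal sketch that assumes an 8-year span from 2024 to 2032; the helper names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


if __name__ == "__main__":
    # IaC market figures quoted above, in billions of USD.
    rate = cagr(1.74, 12.86, 2032 - 2024)
    print(f"Implied CAGR: {rate:.1%}")
    print(f"$1.74B grown at 28.4% for 8 years: ${project(1.74, 0.284, 8):.2f}B")
```

The implied rate comes out at roughly 28.4%, so the quoted start value, end value, and growth rate are consistent with each other.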

AI turns IaC from a labor-intensive task into a streamlined process. Reported figures include a 60–70% reduction in repetitive code writing and over 90% accuracy in code generation. Uber, for instance, reportedly used AI in 2025 to cut configuration errors by 85% across its global operations.

AI also reinforces IaC principles like consistency and repeatability. It analyzes existing infrastructure to enforce naming conventions, tagging standards, and security policies. When manual changes create configuration drift, AI can distinguish between legitimate updates and potential issues, offering automated fixes.
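
To make drift classification concrete, the sketch below compares the attributes declared in code (desired) with what is observed in the account, and separates changes to fields that are expected to vary at runtime from changes that need review. It is a rule-based stand-in for the AI classification described above: the `EXPECTED_TO_VARY` allowlist, attribute names, and sample data are hypothetical, and a real tool would read observed state from the provider's APIs.

```python
from dataclasses import dataclass

# Hypothetical allowlist: attributes that legitimately change at runtime
# (for example, an autoscaler adjusting capacity).
EXPECTED_TO_VARY = {"desired_capacity", "last_modified"}


@dataclass
class Drift:
    resource: str
    attribute: str
    desired: object
    observed: object
    expected: bool  # True if the attribute is on the allowlist


def detect_drift(desired: dict, observed: dict) -> list[Drift]:
    """Compare desired vs observed attributes for every resource declared in code."""
    drifts = []
    for name, want in desired.items():
        have = observed.get(name)
        if have is None:
            # Declared in code but missing from the account.
            drifts.append(Drift(name, "<resource>", "present", "missing", False))
            continue
        for attr in sorted(set(want) | set(have)):
            if want.get(attr) != have.get(attr):
                drifts.append(Drift(name, attr, want.get(attr), have.get(attr),
                                    attr in EXPECTED_TO_VARY))
    return drifts


if __name__ == "__main__":
    desired = {
        "web_asg": {"instance_type": "t3.medium", "desired_capacity": 2},
        "logs_bucket": {"encryption": "aws:kms", "versioning": True},
    }
    observed = {
        "web_asg": {"instance_type": "t3.medium", "desired_capacity": 5},
        "logs_bucket": {"encryption": None, "versioning": True},  # manual change
    }
    for d in detect_drift(desired, observed):
        label = "expected" if d.expected else "REVIEW"
        print(f"[{label}] {d.resource}.{d.attribute}: {d.desired!r} -> {d.observed!r}")
```

Here the capacity change is treated as normal autoscaling, while the removed bucket encryption is flagged for review, which is the kind of distinction an AI-assisted tool aims to make without a hand-written allowlist.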

IaC Tools Overview

Several tools dominate the IaC space, each with its strengths:

- Terraform: Uses HCL for defining infrastructure across multiple cloud providers and has a rich module ecosystem.

- Pulumi: Allows infrastructure to be written in general-purpose languages like Python, TypeScript, or Go; some teams report cutting deployment times by 80–90% with it.

- AWS CloudFormation: Uses YAML or JSON templates and integrates natively with AWS services, though it is largely limited to Amazon's ecosystem.

These tools manage "state", a record of the infrastructure they have deployed. Terraform stores state in a local file by default, but teams typically configure a remote backend (like S3 or Google Cloud Storage) so the state can be shared, while CloudFormation manages state automatically within AWS. Pulumi offers both a managed service and self-managed backends. Proper state management is essential, because state determines which changes need to be applied when the code is updated.
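
The role of state can be illustrated with a simplified planner that compares the resources declared in code with those recorded in state and derives what to create, update, or delete. This is a conceptual sketch only, not how Terraform, Pulumi, or CloudFormation actually implement planning; the resource names and attributes are made up.

```python
def plan(declared: dict[str, dict], state: dict[str, dict]) -> dict[str, list[str]]:
    """Return the resource names to create, update, and delete."""
    create = sorted(set(declared) - set(state))   # in code, not yet deployed
    delete = sorted(set(state) - set(declared))   # deployed, removed from code
    update = sorted(
        name for name in set(declared) & set(state)
        if declared[name] != state[name]          # attributes changed in code
    )
    return {"create": create, "update": update, "delete": delete}


if __name__ == "__main__":
    state = {
        "vpc_main": {"cidr": "10.0.0.0/16"},
        "db_primary": {"size": "db.t3.small"},
        "old_queue": {"fifo": False},
    }
    declared = {
        "vpc_main": {"cidr": "10.0.0.0/16"},
        "db_primary": {"size": "db.t3.medium"},  # changed in code
        "cache": {"nodes": 2},                   # new in code
    }
    for action, names in plan(declared, state).items():
        print(action, names)
```

Without an accurate state record, a planner has no way to know that `old_queue` exists and should be deleted, which is one reason shared, remote state matters for teams.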

The choice of tool often depends on team preferences and workflows. Terraform’s HCL appeals to teams seeking a dedicated infrastructure language. Pulumi, on the other hand, attracts software engineers who benefit from programming constructs like loops and functions. CloudFormation is ideal for teams deeply integrated into AWS.

How AI Improves IaC Workflows

AI elevates IaC workflows beyond just speeding up code generation - it changes how engineers interact with infrastructure.

For example, GitHub has reported that developers using Copilot completed a coding task 55% faster, although that figure comes from general software development rather than IaC specifically. AI can translate natural language into configuration files, eliminating the need to look up syntax or resource properties. It also analyzes usage patterns to recommend optimal settings.

AI simplifies error handling by translating cryptic error messages into plain language and auto-correcting syntax issues. In late 2025, Cloudflare implemented an AI-powered validation system that identified 99.5% of infrastructure issues before deployment.

"In 2026, the bottleneck isn't typing the code; it's designing the architecture. AI frees you to focus on the what and why, handling the verbose how of the implementation." – AIDevStart Editorial Staff

AI also strengthens security and compliance. It can check configuration files against policies written for engines like Open Policy Agent (OPA) to flag vulnerabilities before deployment. For example, Capital One uses an AI-powered system to enforce 200 security controls across its infrastructure code. The system suggests secure defaults, such as enabling encryption, and flags risky configurations like overly permissive security groups.

However, AI isn't perfect. It may sometimes "hallucinate" configurations, suggesting deprecated arguments or invalid resource types. This makes human oversight essential. AI-generated code should never go to production without review, because the model may choose insecure settings, such as allowing inbound traffic from 0.0.0.0/0 (any IPv4 address), simply to make a deployment work. With proper safeguards in place, AI turns IaC into a strategic exercise focused on architectural goals rather than manual coding. This shift sets the stage for fully automated cloud operations.
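
As a rough illustration of the kind of check a reviewer or policy engine applies, the sketch below scans the JSON form of a Terraform plan (as produced by `terraform show -json`) for security-group ingress open to `0.0.0.0/0` or `::/0`. It is a hand-rolled stand-in for a real policy engine such as OPA, it only covers the `aws_security_group` and `aws_security_group_rule` resource types, and the sample plan is fabricated for the example.

```python
OPEN_CIDRS = {"0.0.0.0/0", "::/0"}  # any IPv4 / any IPv6 address


def open_ingress(resource_change: dict) -> bool:
    """True if a planned security-group resource allows ingress from anywhere."""
    # `after` is null for deletions, so fall back to an empty dict.
    after = (resource_change.get("change") or {}).get("after") or {}
    rtype = resource_change.get("type")
    if rtype == "aws_security_group":
        rules = after.get("ingress") or []
    elif rtype == "aws_security_group_rule" and after.get("type") == "ingress":
        rules = [after]
    else:
        return False
    for rule in rules:
        cidrs = set(rule.get("cidr_blocks") or []) | set(rule.get("ipv6_cidr_blocks") or [])
        if cidrs & OPEN_CIDRS:
            return True
    return False


def violations(plan: dict) -> list[str]:
    """Addresses of planned resources that open ingress to the whole internet."""
    return [rc["address"] for rc in plan.get("resource_changes", []) if open_ingress(rc)]


if __name__ == "__main__":
    # Fabricated plan fragment; in practice, json.load() the output of
    # `terraform show -json tfplan`.
    sample_plan = {"resource_changes": [
        {"address": "aws_security_group.web", "type": "aws_security_group",
         "change": {"actions": ["create"], "after": {"ingress": [
             {"from_port": 22, "to_port": 22, "protocol": "tcp",
              "cidr_blocks": ["0.0.0.0/0"], "ipv6_cidr_blocks": []}]}}},
    ]}
    for address in violations(sample_plan):
        print(f"open ingress: {address}")
```

In a pipeline, a non-empty result would typically fail the check and send the change back for human review rather than blocking it silently.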

Kanu AI for Complete Cloud Automation

Kanu AI applies AI-based Infrastructure as Code (IaC) workflows to every stage of the delivery cycle. The platform handles everything from interpreting requirements and deploying solutions to validating performance and fixing issues automatically. You can deploy Kanu AI as a single-tenant service within your AWS VPC through a one-click installation from the AWS Marketplace. This setup keeps your infrastructure code and deployment logs within your security boundaries.

The platform uses three specialized agents working in a continuous loop to keep things running smoothly:

- Intent Agent: Translates plain-language requirements into an auditable delivery specification.

- DevOps Agent: Generates application code and infrastructure definitions, deploying them into a test environment.

- Q/A Agent: Runs over 250 automated validations on the live system, including endpoint testing, log analysis, and metric verification.

If a deployment fails or an issue is detected during validation, Kanu AI analyzes the logs, identifies the root cause, updates the code or infrastructure, and retries. Once all validation checks pass, it opens a pull request for review. Kanu AI reports that this process speeds up feature delivery from requirements to production by 80%.
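
The deploy, validate, fix, and retry cycle described above can be sketched as a bounded loop. Everything here is hypothetical: `deploy`, `validate`, and `propose_fix` are placeholder callables standing in for the agents, not Kanu AI's actual interfaces, and the attempt limit is an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopResult:
    passed: bool
    attempts: int
    history: list[list[str]] = field(default_factory=list)  # failing checks per attempt


def delivery_loop(
    spec: dict,
    deploy: Callable[[dict], None],
    validate: Callable[[dict], list[str]],           # returns names of failing checks
    propose_fix: Callable[[dict, list[str]], dict],  # returns an updated spec
    max_attempts: int = 5,
) -> LoopResult:
    """Deploy, validate, and repair until all checks pass or attempts run out."""
    history = []
    for attempt in range(1, max_attempts + 1):
        deploy(spec)
        failures = validate(spec)
        history.append(failures)
        if not failures:
            # Every check passed: this is where a pull request would be opened.
            return LoopResult(True, attempt, history)
        spec = propose_fix(spec, failures)
    return LoopResult(False, max_attempts, history)


if __name__ == "__main__":
    # Toy stand-ins: the only check requires HTTPS, and the "fix" enables it.
    def deploy(spec): print("deploying", spec)
    def validate(spec): return [] if spec.get("https") else ["https_listener"]
    def fix(spec, failures): return {**spec, "https": True}

    result = delivery_loop({"service": "api", "https": False}, deploy, validate, fix)
    print("passed:", result.passed, "attempts:", result.attempts)
```

Bounding the number of attempts matters in a design like this: a loop that cannot converge should stop and surface its failure history to a human instead of retrying indefinitely.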

Kanu AI operates entirely within your AWS account, adhering to your identity controls, networking policies, and security protocols. With SOC 2 Type II compliance, full audit trails, and instant rollback capabilities, it’s designed with enterprise-level security in mind. The platform uses a usage-based billing model, invoiced monthly, with customers responsible for underlying cloud costs like compute, storage, and model inference.

Kanu AI Features

Kanu AI’s agents are designed to simplify and secure each step of the cloud automation process:

- Intent Agent: This agent learns your team's preferences over time and surfaces tradeoffs to help you make informed decisions. Simply describe what you need in plain language, and it will ask follow-up questions to clarify any ambiguities. The result is an evolving delivery specification tailored to your requirements.

- DevOps Agent: Working with tools like Terraform, AWS CDK, and CloudFormation, the DevOps Agent generates code that fits your existing application. It applies changes directly in your cloud account, relying on real deployment signals rather than static templates.

- Q/A Agent: Unlike traditional validation tools, this agent tests against real endpoints and data paths instead of relying on mocked environments. It analyzes logs and metrics to verify proper behavior and feeds any detected issues back into the loop for automatic corrections.

Security is a top priority. Because Kanu AI runs entirely within your AWS VPC, sensitive infrastructure data stays inside your own AWS account. Its integration with GitHub and GitLab ensures that no changes reach production without review through pull requests. The system also provides detailed audit trails, which suits organizations with strict compliance requirements.

Kanu AI Pricing and Plans

Kanu AI's usage-based pricing model scales with your deployment. You're invoiced monthly, and the underlying cloud infrastructure costs (hosting, compute, storage, networking, and model inference) are incurred within your own AWS account.

| Plan | Access | Features | Governance |
|---|---|---|---|
| Standard Access | Evaluation and trial use | Basic delivery loop, intent capture, code generation, deployment, validation | Terms of Use |
| Enterprise Agreements | Full organizational deployment | Concurrent jobs, SOC 2 Type II compliance, custom security boundaries, dedicated support, multi-cluster support | Master Services Agreement / Order Form |

The Enterprise plan caters to large-scale deployments, supporting multiple concurrent jobs within your account limits. This allows teams to manage several development cycles simultaneously. Enterprise customers also benefit from custom security configurations and dedicated support channels, making the platform an excellent fit for industries with stringent regulations.

All updates and maintenance occur within your VPC, ensuring seamless integration with your existing identity and access management policies.

Automating Cloud Operations with AI

AI has reshaped cloud management by simplifying operations, predicting issues, and enabling real-time adjustments. Its ability to analyze patterns and optimize workflows has revolutionized how teams handle resource provisioning, performance monitoring, and cost efficiency.

Organizations adopting AI-driven automation have reported impressive results: a 60% cut in provisioning times, a 30% drop in operational costs, and a 50% reduction in routine manual tasks.

Automated Resource Provisioning

AI-powered resource provisioning takes the guesswork out of infrastructure deployment. For instance, models like GPT-4 have been reported to exceed 90% accuracy when generating Infrastructure as Code (IaC) scripts, cutting development time by as much as 70%. By drawing on historical data and organizational context, context-aware platforms have reduced configuration errors by 85%.

AI also optimizes resources dynamically. It selects the best virtual machine types based on real-time performance and cost metrics. Additionally, it addresses "zombie infrastructure" - like idle EC2 instances or unused S3 buckets - by identifying and remediating these inefficiencies through actions such as stopping, resizing, or tagging resources for deletion. This is particularly critical, as 73% of organizations exceeded their cloud budgets in 2024.
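
A simple rule-based version of zombie detection flags instances whose utilization stays low over a lookback window and suggests an action. The thresholds, field names, and sample fleet below are illustrative assumptions; an AI-driven tool would learn such thresholds from usage history rather than hard-coding them, and the metrics would come from a monitoring service such as CloudWatch.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Instance:
    instance_id: str
    cpu_percent: list[float]  # e.g. daily average CPU over the lookback window
    network_mb: list[float]   # daily network traffic in MB
    tags: dict[str, str]


# Hypothetical thresholds; tune them to your own workloads.
IDLE_CPU = 2.0
IDLE_NETWORK_MB = 5.0
UNDERUSED_CPU = 20.0


def recommend(inst: Instance) -> str:
    """Suggest an action for one instance: keep, resize, stop, or tag-for-deletion."""
    cpu = mean(inst.cpu_percent)
    net = mean(inst.network_mb)
    if cpu < IDLE_CPU and net < IDLE_NETWORK_MB:
        # Idle. Without an owner tag nobody has claimed it, so flag it for deletion review.
        return "stop" if "owner" in inst.tags else "tag-for-deletion"
    if cpu < UNDERUSED_CPU:
        return "resize"
    return "keep"


if __name__ == "__main__":
    fleet = [
        Instance("i-0a1", [0.4, 0.7, 0.5], [0.8, 1.1, 0.6], {}),
        Instance("i-0b2", [1.2, 0.9, 1.5], [2.0, 1.5, 3.0], {"owner": "data-team"}),
        Instance("i-0c3", [12.0, 15.0, 9.0], [400.0, 350.0, 420.0], {"owner": "web"}),
        Instance("i-0d4", [65.0, 72.0, 58.0], [900.0, 1100.0, 950.0], {"owner": "web"}),
    ]
    for inst in fleet:
        print(inst.instance_id, recommend(inst))
```

Recommending rather than acting directly keeps destructive steps, such as terminating an instance, behind a review step.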

Once resources are efficiently provisioned, the next challenge is ensuring their performance and cost-effectiveness through intelligent monitoring.

Monitoring and Resource Optimization

Monitoring is vital for maintaining smooth operations and controlling costs. Static, threshold-based alerts are increasingly being replaced by AI-driven monitoring systems that correlate signals across metrics, logs, and traces to distinguish genuine issues from normal variation. Here's how AI compares to traditional methods:

| Feature | Traditional Monitoring | AI-Driven Monitoring |
|---|---|---|
| Detection | Static thresholds | Correlation and pattern recognition |
| Identification | Reactive (post-failure) | Predictive (early warnings) |
| Troubleshooting | Manual log/metric triage | Automated root-cause suggestions |
| Scalability | High manual workload | Automated for large-scale systems |
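
The difference between static thresholds and pattern-based detection shows up even in a toy example. The sketch below compares a fixed threshold with a rolling z-score, a simple statistical stand-in for the correlation and pattern recognition that AI-driven tools use. The window size, thresholds, and latency series are made up.

```python
from statistics import mean, pstdev


def static_alerts(values: list[float], threshold: float) -> list[int]:
    """Indexes where a value exceeds a fixed threshold."""
    return [i for i, v in enumerate(values) if v > threshold]


def zscore_alerts(values: list[float], window: int = 5, z: float = 3.0) -> list[int]:
    """Indexes where a value deviates more than `z` standard deviations
    from the mean of the preceding `window` values."""
    alerts = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), pstdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all is unusual.
            if values[i] != mu:
                alerts.append(i)
        elif abs(values[i] - mu) / sigma > z:
            alerts.append(i)
    return alerts


if __name__ == "__main__":
    # Latency in ms: a slow, normal upward drift, then a sudden jump.
    latency = [100, 102, 101, 103, 104, 106, 105, 107, 108, 150, 109]
    print("static (>105 ms):", static_alerts(latency, 105))
    print("rolling z-score:", zscore_alerts(latency))
```

On this series, the static rule fires five times, mostly on normal gradual growth, while the rolling z-score flags only the jump at index 9. That gap is the alert-noise reduction the comparison above describes.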

AI systems also use digital twin modeling to simulate cluster states, predicting potential issues before they escalate. When anomalies occur, self-healing infrastructure steps in to resolve problems like failed pods or unbalanced node loads automatically.

For example, Cloudflare’s AI validation pipelines catch 99.5% of configuration and security issues before they even reach deployment, minimizing outages. AI also filters out unnecessary alerts, ensuring engineers are notified only when manual intervention is necessary.

"AIOps is really becoming a requirement to support modern infrastructure." – Scale Computing

On the cost side, AI tools help teams identify waste by translating plain-English questions into SQL queries against AWS Cost and Usage Reports.
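
To illustrate the plain-English-to-SQL idea, a question such as "Which three services cost the most in May 2025?" might be translated into a query like the one below. The sketch runs against a tiny in-memory SQLite table whose column names are modeled on the AWS Cost and Usage Report's Athena schema (`line_item_product_code`, `line_item_unblended_cost`, `line_item_usage_start_date`). Treat the schema, the data, and the query as illustrative assumptions rather than the full CUR format, and review any generated SQL before relying on its results.

```python
import sqlite3

# A query an AI assistant might produce for:
# "Which three services cost the most in May 2025?"
TOP_SERVICES_SQL = """
SELECT line_item_product_code AS service,
       ROUND(SUM(line_item_unblended_cost), 2) AS cost
FROM cur
WHERE line_item_usage_start_date >= '2025-05-01'
  AND line_item_usage_start_date <  '2025-06-01'
GROUP BY line_item_product_code
ORDER BY cost DESC
LIMIT 3
"""


def top_services(conn: sqlite3.Connection) -> list[tuple[str, float]]:
    return conn.execute(TOP_SERVICES_SQL).fetchall()


def demo_connection() -> sqlite3.Connection:
    """In-memory table holding a few fabricated line items."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE cur (line_item_product_code TEXT, "
        "line_item_unblended_cost REAL, line_item_usage_start_date TEXT)"
    )
    rows = [
        ("AmazonEC2", 120.50, "2025-05-03"),
        ("AmazonEC2", 80.25, "2025-05-20"),
        ("AmazonS3", 15.00, "2025-05-11"),
        ("AmazonRDS", 95.10, "2025-05-15"),
        ("AWSLambda", 4.75, "2025-05-09"),
        ("AmazonS3", 300.00, "2025-04-28"),  # outside the date window
    ]
    conn.executemany("INSERT INTO cur VALUES (?, ?, ?)", rows)
    return conn


if __name__ == "__main__":
    for service, cost in top_services(demo_connection()):
        print(f"{service}: ${cost}")
```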

Together, automated provisioning and intelligent monitoring create a feedback loop that continuously improves operations. Shopify, for example, has reached a 90% automation rate in its infrastructure, reserving human involvement for only the most critical changes. This evolution highlights AI’s ability to not just perform tasks but to learn and adapt, driving ongoing improvement in cloud operations.

Implementation Guide for Automated Cloud Engineering

Setup and Deployment Steps

To get started, deploy the AI agent directly within your cloud account. For instance, Kanu AI installs seamlessly from AWS Marketplace into a dedicated VPC within your existing infrastructure. This setup ensures the AI understands your current resources and adheres to your security protocols from day one.

Begin by configuring IAM roles and linking your Git repositories using access tokens. Define your organizational standards - such as naming conventions, tagging policies, and security requirements - in a standards document that the AI will reference. Describe your infrastructure needs in plain English, and the AI will translate them into Infrastructure as Code (using Terraform, CDK, or CloudFormation) and generate the corresponding application code.
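
A standards document is most useful when its rules are machine-checkable. The sketch below encodes two hypothetical rules, a naming pattern and a set of required tags, and checks proposed resources against them. The pattern, tag keys, and resource data are placeholders to adapt to your own conventions.

```python
import re

# Hypothetical organizational standards.
STANDARDS = {
    # <team>-<env>-<purpose>, lowercase, e.g. "payments-prod-api"
    "name_pattern": r"[a-z0-9]+-(dev|staging|prod)-[a-z0-9-]+",
    "required_tags": {"owner", "cost-center", "environment"},
}


def check_resource(name: str, tags: dict[str, str], standards: dict = STANDARDS) -> list[str]:
    """Return human-readable violations for a single proposed resource."""
    problems = []
    if not re.fullmatch(standards["name_pattern"], name):
        problems.append(f"{name}: name does not match {standards['name_pattern']}")
    missing = sorted(standards["required_tags"] - set(tags))
    if missing:
        problems.append(f"{name}: missing tags {missing}")
    env = tags.get("environment")
    if env and f"-{env}-" not in name:
        problems.append(f"{name}: environment tag '{env}' does not match name")
    return problems


if __name__ == "__main__":
    proposed = {
        "payments-prod-api": {"owner": "payments", "cost-center": "4410", "environment": "prod"},
        "TempBucket": {"owner": "alice"},
    }
    for name, tags in proposed.items():
        for message in check_resource(name, tags) or [f"{name}: ok"]:
            print(message)
```

The same rules can serve two purposes: giving the AI context when it generates code, and acting as a gate that rejects output which ignores them.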

Before any code is pushed to your repository, the system validates it against real endpoints and data paths. The AI performs over 250 checks, monitors logs and metrics, and automatically updates the infrastructure until all tests pass. Only then does it push changes to Git, so human review happens after every automated check has passed. In one reported implementation, these automated validations and fixes cut deployment times from hours to minutes, and Kanu AI reports that the approach speeds delivery from requirements to production by 80%.

"The gap [between code generation and delivery] is where delivery slows down." – Kanu AI

For secure operations, store Terraform state files in remote storage like AWS S3 or Azure Blob Storage, with state locking enabled to prevent concurrent modifications. Avoid hardcoding credentials by integrating with tools like AWS Secrets Manager, Azure Key Vault, or GitHub Secrets. Stick to gradual rollouts and consistent branch naming to maintain a streamlined process.
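
State locking boils down to an atomic "create only if absent" operation. The sketch below shows the idea with a local lock file created through `os.open` with `O_CREAT | O_EXCL`. Remote backends rely on their storage service's own primitives for the same purpose, so treat this as an illustration of the protocol, not a substitute for a backend's built-in locking. The lock-file name and holder strings are hypothetical.

```python
import os
import tempfile
from contextlib import contextmanager


class StateLocked(RuntimeError):
    """Raised when another writer already holds the state lock."""


@contextmanager
def state_lock(state_dir: str, holder: str):
    """Hold an exclusive lock on the state in `state_dir` for the duration of the block."""
    lock_path = os.path.join(state_dir, "state.lock")  # hypothetical file name
    try:
        # O_EXCL makes creation fail atomically if the file already exists.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        with open(lock_path) as f:
            current = f.read().strip() or "unknown holder"
        raise StateLocked(f"state is locked by {current}") from None
    with os.fdopen(fd, "w") as f:
        f.write(holder)  # record who holds the lock, for error messages
    try:
        yield
    finally:
        os.remove(lock_path)  # release even if the guarded block raised


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        with state_lock(d, "ci-run-1"):
            try:
                with state_lock(d, "laptop-alice"):
                    pass
            except StateLocked as e:
                print("second writer blocked:", e)
        with state_lock(d, "laptop-alice"):
            print("lock acquired after release")
```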

Once deployed, focus on systematic validation and continuous improvement to ensure your infrastructure performs reliably and securely.

Validation and Continuous Improvement

After deployment, rigorous validation and ongoing refinements are key to maintaining a reliable infrastructure. This approach ensures that every change meets security, performance, and compliance standards before reaching production.

Validation takes place in multiple stages. Start with basic checks: formatting (terraform fmt) and syntax and internal-consistency validation (terraform validate). Follow these with linting using TFLint and security scans using tools like Checkov or tfsec to catch errors and vulnerabilities before deployment. Finally, advanced tests confirm that live endpoints respond correctly and data flows as expected.
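
The staged approach can be wired up as a fail-fast runner. The stage list reflects the tools named above with commonly used invocations (`terraform fmt -check -recursive`, `terraform validate`, `tflint`, `checkov -d .`); confirm the flags against each tool's documentation for your version. The `run_stages` helper is a hypothetical sketch that stops at the first failing stage.

```python
import subprocess
import sys
from typing import Optional

# Stages run in order: cheap format and syntax checks first, then lint and security.
TERRAFORM_STAGES = [
    ("format", ["terraform", "fmt", "-check", "-recursive"]),
    ("validate", ["terraform", "validate"]),  # requires `terraform init` beforehand
    ("lint", ["tflint"]),
    ("security", ["checkov", "-d", "."]),
]


def run_stages(stages: list[tuple[str, list[str]]], cwd: str = ".") -> tuple[bool, Optional[str]]:
    """Run each (name, command) pair in order, stopping at the first failure.

    Returns (True, None) if every stage exits with code 0,
    otherwise (False, name_of_failing_stage).
    """
    for name, cmd in stages:
        print(f"==> {name}: {' '.join(cmd)}")
        try:
            result = subprocess.run(cmd, cwd=cwd)
        except FileNotFoundError:
            print(f"{name}: '{cmd[0]}' could not be started (not installed?)", file=sys.stderr)
            return False, name
        if result.returncode != 0:
            print(f"{name}: exited with code {result.returncode}", file=sys.stderr)
            return False, name
    return True, None


if __name__ == "__main__":
    ok, failed = run_stages(TERRAFORM_STAGES)
    print("all stages passed" if ok else f"stopped at stage: {failed}")
```

Ordering cheap checks first keeps feedback fast: a formatting error is reported in seconds instead of after a full security scan.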

In January 2026, DevOps Engineer Pravesh Sudha introduced an AI-powered "Infra Guardian" using Terraform, GitHub Actions, and Google Gemini. This system scanned every pull request with Terrascan, automatically rejecting any PRs that lacked an HTTPS listener or included high-risk security issues, while providing actionable remediation suggestions.

"AI in DevOps is not about replacing engineers - it's about empowering them." – Pravesh Sudha, DevOps Engineer

The pull request workflow plays a critical role in continuous improvement. Human reviewers assess the AI-generated code, make adjustments, and either approve or reject changes. This feedback loop allows the AI to learn from human decisions and adapt to team preferences. Reported outcomes from AI-assisted infrastructure workflows include a 70% cut in provisioning time at Stripe and an 85% reduction in configuration errors from Uber's context-aware platform.

Always test AI-generated code in isolated sandbox environments using temporary credentials before moving to production. Run terraform plan to preview changes before they are applied, and use policy-as-code engines like HashiCorp Sentinel to enforce security, naming, and tagging standards. Monitor for configuration drift and run cost estimation checks to avoid unexpected expenses.
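
Plan output can also be gated automatically. This is a minimal sketch that assumes the JSON plan format produced by `terraform show -json tfplan`, in which each entry in `resource_changes` carries an `address` and a `change.actions` list (for example `["create"]`, `["update"]`, or `["delete", "create"]` for a replacement). The gating rule, requiring explicit approval for any delete or replacement, is an assumed policy for illustration.

```python
import json
from collections import Counter


def summarize(plan: dict) -> Counter:
    """Count planned resource changes by action type."""
    counts = Counter()
    for rc in plan.get("resource_changes", []):
        actions = rc["change"]["actions"]
        if set(actions) == {"delete", "create"}:
            counts["replace"] += 1  # either order, depending on create_before_destroy
        else:
            # Single-action entries: create, update, delete, read, or no-op.
            counts[actions[0]] += 1
    return counts


def destructive(plan: dict) -> list[str]:
    """Addresses that would be deleted or replaced; these need human approval."""
    return [
        rc["address"]
        for rc in plan.get("resource_changes", [])
        if "delete" in rc["change"]["actions"]
    ]


if __name__ == "__main__":
    # In practice: terraform plan -out=tfplan && terraform show -json tfplan > plan.json
    plan = json.loads("""{"resource_changes": [
      {"address": "aws_instance.web", "change": {"actions": ["update"]}},
      {"address": "aws_db_instance.main", "change": {"actions": ["delete", "create"]}},
      {"address": "aws_s3_bucket.logs", "change": {"actions": ["no-op"]}}
    ]}""")
    print(dict(summarize(plan)))
    blocked = destructive(plan)
    if blocked:
        print("requires approval:", blocked)
```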

Conclusion

Cloud engineering is evolving rapidly, with AI-driven automation reshaping how teams deliver speed and reliability. This transformation allows engineers to shift their focus toward strategy and innovation, rather than being bogged down by repetitive tasks.

The impact of this shift is clear in the numbers: AI-powered automation can reduce provisioning time by 70%, lower failed deployments by 75%, and deliver 40–70% cost savings. As Asif Awan from StackGen aptly put it, "Infrastructure will become an intelligent partner that amplifies human creativity rather than constraining it."[10]

This goes far beyond simple code generation. End-to-end delivery loops now cover the entire process: capturing intent, building infrastructure, deploying, validating with over 250 checks, and automatically iterating on failures. Kanu AI reports that this makes delivery from requirements to production 80% faster.

For organizations ready to embrace this change, Kanu AI provides a reliable solution. With features like single-tenant deployment, SOC 2 Type II compliance, and a PR-based workflow, Kanu AI ensures data security while maintaining clear audit trails.

This evolution marks a new era where AI becomes a strategic partner, taking over routine tasks and empowering teams to focus on innovation, strategic goals, and delivering greater value to the business. The future of cloud engineering is here, and it’s smarter, faster, and more collaborative than ever.

FAQs

How do I start automating cloud engineering without breaking production?

To automate cloud engineering effectively and safely, start by testing your scripts and configurations in a staging environment. This approach allows you to identify and fix issues before they impact production systems. Use tools designed to validate settings early in the process to catch errors quickly.

Implement version control to track changes, making it easier to understand and revert modifications if needed. When deploying, take an incremental approach - begin with non-critical systems to reduce potential risks. Monitor these systems closely to ensure everything works as expected.

As confidence builds, you can gradually expand automation efforts. Be sure to include safeguards like rollback options and maintain strong observability to monitor performance and detect anomalies. Following these steps can help you reduce risks and ensure a seamless shift to automation.

What guardrails prevent AI-generated IaC from creating insecure resources?

Guardrails for AI-generated Infrastructure as Code (IaC) focus on security scanning, policy enforcement, and compliance checks. Incorporating IaC security scans into CI/CD pipelines is crucial for identifying misconfigurations early, long before deployment. Advanced tools now provide context-aware detection, which helps cut down on false positives and delivers more accurate remediation steps. When paired with automated validation and strict adherence to established best practices, these safeguards ensure AI-generated IaC remains secure while reducing the chances of creating vulnerable resources.

How do I measure ROI from automated provisioning, monitoring, and cost optimization?

Measuring ROI starts with tracking essential metrics like lowered cloud costs, eliminated waste (think unused resources), and better resource utilization. To put the savings into perspective, compare your expenses before and after implementing automation.

Don’t stop there - keep an eye on how much manual effort and time is saved on routine tasks. This gives you a clear picture of how automation impacts operational efficiency. By consistently monitoring these areas, you can effectively gauge both the financial and operational benefits of your automation efforts.
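
As a worked example of this comparison, the sketch below combines cloud-bill savings and engineering hours saved into a simple monthly ROI figure. All inputs, including the hourly rate and the tooling cost, are hypothetical placeholders; substitute your own before-and-after measurements.

```python
from dataclasses import dataclass


@dataclass
class Period:
    cloud_cost: float    # monthly cloud bill, USD
    manual_hours: float  # monthly engineer-hours spent on routine tasks


def monthly_roi(before: Period, after: Period, hourly_rate: float, tooling_cost: float) -> dict:
    """ROI = (benefit - cost) / cost, where benefit = cloud savings + labor saved."""
    if tooling_cost <= 0:
        raise ValueError("tooling_cost must be positive")
    cost_savings = before.cloud_cost - after.cloud_cost
    labor_savings = (before.manual_hours - after.manual_hours) * hourly_rate
    benefit = cost_savings + labor_savings
    return {
        "cost_savings": cost_savings,
        "labor_savings": labor_savings,
        "roi": (benefit - tooling_cost) / tooling_cost,
    }


if __name__ == "__main__":
    result = monthly_roi(
        before=Period(cloud_cost=50_000, manual_hours=160),
        after=Period(cloud_cost=38_000, manual_hours=60),
        hourly_rate=90,
        tooling_cost=5_000,
    )
    print(result)
```

With these placeholder inputs, $12,000 in cloud savings plus 100 saved hours at $90/hour gives $21,000 of monthly benefit against $5,000 of tooling cost, an ROI of 3.2 (320%).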